Thursday, December 20, 2012

All Your Site Are Belong To Us

'Competitive enrollment' is exactly that.

This is a graph I tend to show frequently to my clients – it shows the relative enrollment rates for two groups of sites in a clinical trial we'd been working on. The blue line is the aggregate rate of the 60-odd sites that attended our enrollment workshop, while the green line tracks enrollment for the 30 sites that did not attend the workshop. As a whole, the attendees were better enrollers than the non-attendees, but the performance of both groups was declining.

Happily, the workshop produced an immediate and dramatic increase in the enrollment rate of the sites who participated in it – they not only rebounded, but they began enrolling at a better rate than ever before. Those sites that chose not to attend the workshop became our control group, and showed no change in their performance.

The other day, I wrote about ENACCT's pilot program to improve enrollment. Five oncology research sites participated in an intensive, highly customized program to identify and address the issues that stood in the way of enrolling more patients. The sites in general were highly enthused about the program, and felt it had a positive impact on their operations.

There was only one problem: enrollment didn't actually increase.

Here’s the data:

This raises an obvious question: how can we reconcile these disparate outcomes?

On the one hand, an intensive, multi-day, customized program showed no improvement in overall enrollment rates at the sites.

On the other, a one-day workshop with sixty sites (which addressed many of the same issues as the ENACCT pilot: communications, study awareness, site workflow, and patient relationships) resulted in an immediate and clear improvement in enrollment.

There are many possible answers to this question, but after a deeper dive into our own site data, I've become convinced that there is one primary driver at work: for all intents and purposes, site enrollment is a zero-sum game. Our workshop increased the accrual of patients into our study, but most of that increase came as a result of decreased enrollments in other studies at our sites.

Our workshop graph shows increased enrollment ... for one study. The ENACCT data is across all studies at each site. It stands to reason that if sites are already operating at or near their maximum capacity, then the only way to improve enrollment for your trial is to get the sites to care more about your trial than about other trials that they’re also participating in.

And that makes sense: many of the strategies and techniques that my team uses to increase enrollment are measurably effective, but there is no reason to believe that they result in permanent, structural changes to the sites we work with. We don’t redesign their internal processes; we simply work hard to make our sites like us and want to work with us, which results in higher enrollment. But only for our trials.

So the next time you see declining enrollment in one of your trials, your best bet is not that the patients have disappeared, but rather that your sites' attention has wandered elsewhere.

Tuesday, December 11, 2012

What (If Anything) Improves Site Enrollment Performance?

Here are the monthly clinical trial accruals at each of the 5 sites. The dashed lines mark when the pilots were implemented:

4 of the 5 sites showed no discernible improvement. The one site that did show increasing enrollment appears to have been improving before any of the interventions kicked in.

This is a painful but important result for anyone involved in clinical research today, because the improvements put in place through the NCCTBC process were the product of an intensive, customized approach. Each site had 3 multi-day learning sessions to map out and test specific improvements to their internal communications and processes (a total of 52 hours of workshops). In addition, each site was provided tracking tools and assigned a coach to assist them with specific accrual issues.

That’s an extremely large investment of time and expertise for each site. If the results had been positive, it would have been difficult to project how NCCTBC could be scaled up to work at the thousands of research sites across the country. Unfortunately, we don’t even have that problem: the needle simply did not move.

While ENACCT plans a second round of pilot sites, I think we need to face a more sobering reality: we cannot squeeze more patients out of sites through training and process improvements. It is widely believed in the clinical research industry that sites are low-efficiency bottlenecks in the enrollment process. If we could just "fix" them, the thinking goes – streamline their workflow, improve their motivation – we could quickly improve the speed at which our trials complete. The data from the NCCTBC paints an entirely different picture, though. It shows us that even when we pour large amounts of time and effort into a tailored program of "evidence and practice-based changes", our enrollment ROI may be nonexistent.

I applaud the ENACCT team for this pilot, and especially for sharing the full monthly enrollment totals at each site. This data should cause clinical development teams everywhere to pause and reassess their beliefs about site enrollment performance and how to improve it.

Friday, November 16, 2012

The Accuracy of Patient Reported Diagnoses

Novelist Philip Roth recently got embroiled in a small spat with the editors of Wikipedia regarding the background inspiration for one of his books. After a colleague attempted to correct the entry for The Human Stain on Roth's behalf, he received the following reply from a Wikipedia editor:

I understand your point that the author is the greatest authority on their own work, but we require secondary sources.

Report: 0% of decapitees could accurately recall their diagnosis

While recent FDA guidance has helped to solidify our approaches to incorporating PROs into traditionally-structured clinical trials, there are still a number of open questions about how far we can go with relying exclusively on what patients tell us about their medical conditions. These questions come to the forefront when we consider the potential of "direct to patient" clinical trials, such as the recently-discontinued REMOTE trial from Pfizer, a pilot study that attempted to assess the feasibility of conducting a clinical trial without the use of local physician investigators.

Among other questions, the REMOTE trial forces us to ask: without physician assessment, how do we know the patients we recruit even have the condition being studied? And if we need more detailed medical data, how easy will it be to obtain from their regular physicians? Unfortunately, that study ended due to lack of enrollment, and Pfizer has not been particularly communicative about any lessons learned.

Luckily for the rest of us, at least one CRO, Quintiles, is taking steps to methodically address and provide data for some of these questions. They are moving forward with what appears to be a small series of studies that assess the feasibility and accuracy of information collected in the direct-to-patient arena. Their first step is a small pilot study of 50 patients with self-reported gout, conducted by both Quintiles and Outcomes Health Information Services. The two companies have jointly published their data in the open-access Journal of Medical Internet Research.

(Before getting into the article's content, let me just emphatically state: kudos to the Quintiles and Outcomes teams for submitting their work to peer review, and to publication in an open access journal. Our industry needs much, much more of this kind of collaboration and commitment to transparency.)

The study itself is fairly straightforward: 50 patients were enrolled (out of 1250 US patients who were already in a Quintiles patient database with self-reported gout) and asked to complete an online questionnaire as well as permit access to their medical records.

The twin goals of the study were to assess the feasibility of collecting the patients' existing medical records and to determine the accuracy of the patients' self-reported diagnosis of gout.

To obtain patients' medical records, the study team used a belt-and-suspenders approach: first, the patients provided an electronic release along with their physicians' contact information. Then, a paper release form was also mailed to the patients, to be used as backup if the electronic release was insufficient.

To me, the results from the attempt at obtaining the medical records are actually the most interesting part of the study, since this is going to be an issue in pretty much every DTP trial that's attempted. Although the numbers are obviously quite small, the results are at least mildly encouraging:

- 38 Charts Received

  - 28 required electronic release only

  - 10 required paper release

- 12 Charts Not Received

  - 8 no chart mailed in time

  - 2 physician required paper release, patient did not provide

  - 2 physician refused

If the electronic release had been used on its own, 28 charts (56%) would have been available. Adding the suspenders of a follow-up paper form increased the total to a respectable 76%. The authors do not mention how aggressively they pursued obtaining the records from physicians, nor how long they waited before giving up, so it's difficult to determine how many of the 8 charts that went past the deadline could also potentially have been recovered.
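The percentages above follow directly from the chart counts reported in the study. As a minimal sketch of that arithmetic (the variable and function names are mine, not the authors'):

```python
# Chart counts as reported in the pilot (50 enrolled patients).
enrolled = 50
electronic_only = 28   # charts obtained with the electronic release alone
paper_needed = 10      # additional charts obtained after the paper release

received = electronic_only + paper_needed


def pct(part, whole):
    """Return part/whole as a whole-number percentage."""
    return round(100 * part / whole)


electronic_rate = pct(electronic_only, enrolled)
combined_rate = pct(received, enrolled)

print(f"Electronic release alone: {electronic_rate}%")
print(f"With paper follow-up:     {combined_rate}%")
```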

Of the 38 charts received, 35 (92%) had direct confirmation of a gout diagnosis and 2 had indirect confirmation (a reference to gout medication). Only 1 chart had no evidence for or against a diagnosis. So it is fair to conclude that these patients were highly reliable, at least insofar as their report of receiving a prior diagnosis of gout was concerned.

In some ways, though, this represents a pretty optimistic case. Most of these patients had been living with gout for many years, and "gout" is a relatively easy thing to remember. Patients were not asked questions about the type of gout they had or any other details that might have been checked against their records.

The authors note that they "believe [this] to be the first direct-to-patient research study involving collection of patient-reported outcomes data and clinical information extracted from patient medical records." However, I think it's very worthwhile to bring up a comparison with this study, published almost 20 years ago in the Annals of the Rheumatic Diseases. In that (pre-internet) study, researchers mailed a survey to 472 patients who had visited a rheumatology clinic 6 months previously. They were therefore able to match all of the survey responses with an existing medical record, and compare the patients' self-reported diagnoses in much the same way as the current study. Studying a more complex set of diseases (arthritis), the 1995 paper paints a more complex picture: patient accuracy varied considerably by disease, from very accurate (100% for those suffering from ankylosing spondylitis, 90% for rheumatoid arthritis) to not very exact at all (about 50% for psoriatic arthritis and osteoarthritis).

Interestingly, the Quintiles/Outcomes paper references a larger ongoing study in rheumatoid arthritis as well, which may introduce some of the complexity seen in the 1995 research.

Overall, I think this pilot does exactly what it set out to do: it gives us a sense of how patients and physicians will react to this type of research, and helps us better refine approaches for larger-scale investigations. I look forward to hearing more from this team.

Also cited: I Rasooly, et al., Comparison of clinical and self reported diagnosis for rheumatology outpatients, Annals of the Rheumatic Diseases 1995 DOI:10.1136/ard.54.10.850

Image courtesy Flickr user stevekwandotcom.

Tuesday, October 2, 2012

Decluttering the Dashboard

It’s Gresham’s Law for clinical trial metrics: Bad data drives out good. Here are 4 steps you can take to fix it.

Many years ago, when I was working in the world of technology startups, one “serial entrepreneur” told me about a technique he had used when raising investor capital for his new database firm: since his company marketed itself as having cutting-edge computing algorithms, he went out and purchased a bunch of small, flashing LED lights and simply glued them onto the company’s servers. When the venture capital folks came out for due diligence meetings, they were provided a dramatic view into the darkened server room, brilliantly lit up by the servers’ energetic flashing. It was the highlight of the visit, and got everyone’s investment enthusiasm ratcheted up a notch.

The clinical trials dashboard is a candy store: bright, vivid, attractive ... and devoid of nutritional value.

And an impressive system it was, chock full of bubble charts and histograms and sliders. For a moment, I felt like a kid in a candy store. So much great stuff ... how to choose?

Then the presenter told a story: on a recent trial, a data manager in Italy, reviewing the analytics dashboard, alerted the study team to the fact that there was an enrollment imbalance in Japan, with one site enrolling all of the patients in that country. This was presented as a success story for the system: it linked up disparate teams across the globe to improve study quality.

But to me, this was a small horror story: the dashboard had gotten so cluttered that key performance issues were being completely missed by the core operations team. The fact that a distant data manager had caught the issue was a lucky break, certainly, but one that should have set off alarm bells about how important signals were being overwhelmed by the noise of charts and dials and “advanced visualizations”.

Swamped with high-precision trivia

It’s Gresham’s Law for clinical trial metrics: Bad data drives out good. Bad data – samples sliced so thin they’ve lost significance, histograms of marginal utility made “interesting” (and nearly unreadable) by 3-D rendering, performance grades that have never been properly validated. Bad data is plentiful and much, much easier to obtain than good data.

So what can we do? Here are 4 initial steps to decluttering the dashboard:

1. Abandon “Actionable Analytics”

Everybody today sells their analytics as “actionable” [including, to be fair, even one company’s website that the author himself may be guilty of drafting]. The problem, though, is that any piece of data – no matter how tenuous and insubstantial – can be made actionable. We can always think of some situation where an action might be influenced by it, so we decide to keep it. As a result, we end up swamped with high-precision trivia (Dr. Smith is enrolling at the 82nd percentile among UK sites!) that does not influence important decisions but competes for our attention. We need to stop reporting data simply because it’s there and we can report it.

2. Identify Key Decisions First

The above process (which seems pretty standard nowadays) is backwards. We look at the data we have, and ask ourselves whether it’s useful. Instead, we need to follow a more disciplined process of first asking ourselves what decisions we need to make, and when we need to make them. For example:

- When is the earliest we will consider deactivating a site due to non-enrollment?

- On what schedule, and for which reasons, will senior management contact individual sites?

- At what threshold will imbalances in safety data trigger more thorough investigation?

Every trial will have different answers to these questions. Therefore, the data collected and displayed will also need to be different. It is important to invest time and effort to identify critical benchmarks and decision points, specific to the needs of the study at hand, before building out the dashboard.

3. Recognize and Respect Context

As some of the questions above make clear, many important decisions are time-dependent. Often, determining when you need to know something is every bit as important as determining what you want to know. Too many dashboards keep data permanently anchored over the course of the entire trial even though it's only useful during a certain window. For example, a chart showing site activation progress compared to benchmarks should no longer be competing for attention on the front of a dashboard after all sites are up and running – it will still be important information for the next trial, but for managing this trial now, it should no longer be something the entire team reviews regularly.

In addition to changing over time, dashboards should be thoughtfully tailored to major audiences. If the protocol manager, medical monitor, CRAs, data managers, and senior executives are all looking at the same dashboard, then it’s a dead certainty that many users are viewing information that is not critical to their job function. While it isn't always necessary to develop a unique topline view for every user, it is worthwhile to identify the 3 or 4 major user types, and provide them with their own dashboards (so the person responsible for tracking enrollment in Japan is in a position to immediately see an imbalance).

4. Give your Data Depth

Many people – myself included – are reluctant to part with any data. We want more information about study performance, not less. While this isn't a bad thing to want, it does contribute to the tendency to cram as much as possible into the dashboard.

The solution is not to get rid of useful data, but to bury it. Many reporting systems have the ability to drill down into multiple layers of information: this capability should be thoughtfully (but aggressively!) used to deprioritize all of your useful-but-not-critical data, moving it off the dashboard and into secondary pages.

Bottom Line

The good news is that operational data is becoming easier to access, aggregate, and monitor every day. The bad news is that our current systems are not designed to handle the flood of new information, and instead have become choked with visually-appealing-but-insubstantial chart candy. If we want to have any hope of getting a decent return on our investment from these systems, we need to take a couple steps back and determine: what's our operational strategy, and who needs what data, when, in order to successfully execute against it?

Thursday, August 16, 2012

Clinical Trial Alerts: Nuisance or Annoyance?

Will physicians change their answers when tired of alerts?

I am an enormous fan of electronic medical records (EMRs). Or rather, more precisely, I am an enormous fan of what EMRs will someday become – current versions tend to leave a lot to be desired. Reaction to these systems among physicians I’ve spoken with has generally ranged from "annoying" to "f*$%#^ annoying", and my experience does not seem to be at all unique.

The (eventual) promise of EMRs in identifying eligible clinical trial participants is twofold:

First, we should be able to query existing patient data to identify a set of patients who closely match the inclusion and exclusion criteria for a given clinical trial. In reality, however, many EMRs are not easy to query, and the data inside them isn’t as well-structured as you might think. (The phenomenon of "shovelware" – masses of paper records scanned and dumped into the system as quickly and cheaply as possible – has been greatly exacerbated by governments providing financial incentives for the immediate adoption of EMRs.)

Second, we should be able to identify potential patients when they’re physically at the clinic for a visit, which is really the best possible moment. Hence the Clinical Trial Alert (CTA): a pop-up or other notification within the EMR that the patient may be eligible for a trial. The major issue with CTAs is the annoyance factor – physicians tend to feel that they disrupt their natural clinical routine, making each patient visit less efficient. Multiple alerts per patient can be especially frustrating, resulting in "alert overload".

A very intriguing recent study in the Journal of the American Medical Informatics Association looked to measure a related issue: alert fatigue, or the tendency for CTAs to lose their effectiveness over time. The response rate to the alerts definitely decreased steadily over time, but the authors were mildly optimistic in their assessment, noting that response rate was still respectable after 36 weeks – somewhere around 30%:

However, what really struck me here is that the referral rate – the rate at which the alert was triggered to bring in a research coordinator – dropped much more precipitously than the response rate:

This is remarkable considering that the alert consisted of only two yes/no questions. Answering either question was considered a "response", and answering "yes" to both questions was considered a "referral".

- Did the patient have a stroke/TIA in the last 6 months?

- Is the patient willing to undergo further screening with the research coordinator?
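To make the distinction between a "response" and a "referral" concrete, here is a minimal sketch of how each alert could be classified under the definitions above. The `classify` function and the sample alerts are hypothetical, invented for illustration; they are not taken from the study.

```python
from typing import Optional


def classify(had_stroke: Optional[bool], willing: Optional[bool]) -> str:
    """Classify one alert; None means the question was left unanswered."""
    if had_stroke is None and willing is None:
        return "ignored"
    if had_stroke is True and willing is True:
        return "referral"
    return "response"  # at least one question answered, but no referral


# Hypothetical alerts (stroke/TIA answer, willingness answer).
alerts = [(True, True), (True, False), (None, None), (False, None)]
labels = [classify(s, w) for s, w in alerts]

# A referral also counts as a response, since both questions were answered.
responses = sum(label != "ignored" for label in labels)
referrals = labels.count("referral")

print(f"response rate: {responses / len(alerts):.0%}")
print(f"referral rate: {referrals / len(alerts):.0%}")
```

Under these definitions, a physician who keeps answering but marks the patient as unwilling still counts toward the response rate while contributing nothing to the referral rate.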

The only plausible explanation for referrals to drop faster than responses is that repeated exposure to the CTA led the physicians to more frequently mark the patients as unwilling to participate. (This was not actual patient fatigue: the few patients who were the subject of multiple CTAs had their second alert removed from the analysis.)

So, it appears that some physicians remained nominally compliant with the system, but avoided the extra work involved in discussing a clinical trial option by simply marking the patient as uninterested. This has some interesting implications for how we track physician interaction with EMRs and CTAs, as basic compliance metrics may be undermined by users tending towards a path of least resistance.

Sunday, July 15, 2012

Site Enrollment Performance: A Better View

Consider this graphic, showing enrolled patients at each site. It came through on a weekly "Site Newsletter" for a trial I was working on:

I chose this histogram not because it’s particularly bad, but because it’s supremely typical. Don’t get me wrong ... it’s really bad, but the important thing here is that it looks pretty much exactly like every site enrollment histogram in every study I’ve ever worked on.

This is a wasted opportunity. Whether we look at per-site enrollment with internal teams to develop enrollment support plans, or share this data with our sites to inform and motivate them, a good chart is one of the best tools we have. To illustrate this, let’s look at a few examples of better ways to look at the data.

This chart improves on the standard histogram in a few important ways:

- It looks better. This is not a minor point when part of our work is to engage sites and make them feel like they are part of something important. Actually, this graph is made clearer and more appealing mostly by the removal of useless attributes (extraneous whitespace, background colors, and unhelpful labels).

- It adds patient disposition information. Many graphs – like the one at the beginning of this post – are vague about who is being counted. Does "enrolled" include patients currently being screened, or just those randomized? Interpretations will vary from reader to reader. Instead, this chart makes patient status an explicit variable, without adding to the complexity of the presentation. It also provides a bit of information about recent performance, by showing patients who have been consented but not yet fully screened.

- It ranks sites by their total contribution to the study, not by the letters in the investigator’s name. And that is one of the main reasons we like to share this information with our sites in the first place.

There are many other ways in which essentially the same data can be re-sliced or restructured to underscore particular trends or messages. Here are two that I look at frequently, and often find worth sharing.

Then versus Now

This tornado chart is an excellent way of showing site-level enrollment trajectory, with each site's prior (left) and subsequent (right) contributions separated out. This example spotlights activity over the past month, but for slower trials a larger timescale may be more appropriate. Also, how the data is sorted can be critical in the communication: this could have been ranked by total enrollment, but instead sorts first on most-recent screening, clearly showing who’s picked up, who’s dropped off, and who’s remained constant (both good and bad).

This is especially useful when looking at a major event (e.g., pre/post protocol amendment), or where enrollment is expected to have natural fluctuations (e.g., in seasonal conditions).

Net Patient Contribution

In many trials, site activation occurs in a more or less "rolling" fashion, with many sites not starting until later in the enrollment period. This makes simple enrollment histograms downright misleading, as they fail to differentiate sites by the length of time they’ve actually been able to enroll. Reporting enrollment rates (patients per site per month) is one straightforward way of compensating for this, but it has the unfortunate effect of showing extreme (and, most importantly, non-predictive) variance for sites that have not been enrolling for very long.

As a result, I prefer to measure each site in terms of its net contribution to enrollment, compared to what it was expected to do over the time it was open:

To clarify this, consider an example: A study expects sites to screen 1 patient per month. Both Site A and Site B have failed to screen a single patient so far, but Site A has been active for 6 months, whereas Site B has only been active 1 month.

On an enrollment histogram, both sites would show up as tied at 0. However, Site A’s 0 is a lot more problematic – and predictive of future performance – than Site B’s 0. If I compare them to benchmark, then I show how many total screenings each site is below the study’s expectation: Site A is at -6, and Site B is only -1, a much clearer representation of current performance.
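The Site A / Site B comparison can be sketched in a few lines. This is only an illustration of the arithmetic, assuming a flat expected rate of 1 screening per month as in the example; the function name and data layout are my own.

```python
def net_contribution(screened, months_active, expected_per_month=1.0):
    """Screenings above (+) or below (-) expectation while the site was open."""
    return screened - expected_per_month * months_active


# (patients screened, months active) for the two sites in the example.
sites = {"Site A": (0, 6), "Site B": (0, 1)}

net = {name: net_contribution(screened, months)
       for name, (screened, months) in sites.items()}

# Summing the bars gives the study-level position against expectation.
study_total = sum(net.values())

for name, value in net.items():
    print(f"{name}: {value:+.0f}")
print(f"Study overall: {study_total:+.0f}")
```

A trial with a ramp-up period or site-specific targets would need a different expectation than a single flat rate.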

This graphic has the added advantage of showing how the study as a whole is doing. Comparing the total volume of positive to negative bars gives the viewer an immediate visceral sense of whether the study is above or below expectations.

The above are just 3 examples – there is a lot more that can be done with this data. What is most important is that we first stop and think about what we’re trying to communicate, and then design clear, informative, and attractive graphics to help us do that.

Wednesday, June 20, 2012

Faster Trials are Better Trials

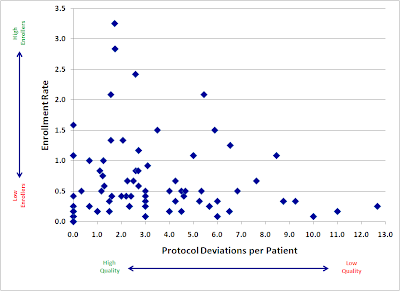

When considering clinical data collected from sites, what is the relationship between these two factors?

- Quantity: the number of patients enrolled by the site

- Quality: the rate of data issues per enrolled patient

Obviously, this has serious implications for those of us in the business of accelerating clinical trials. If getting studies done faster comes at the expense of clinical data quality, then the value of the entire enterprise is called into question. As regulatory authorities take an increasingly skeptical attitude towards missing, inconsistent, and inaccurate data, we must strive to make data collection better, and absolutely cannot afford to risk making it worse.

As a result, we've started to look closely at a variety of data quality metrics to understand how they relate to the pace of patient recruitment. The results, while still preliminary, are encouraging.

Here is a plot of a large, recently-completed trial. Each point represents an individual research site, mapped by both speed (enrollment rate) and quality (protocol deviations). If faster enrolling caused data quality problems, we would expect to see a cluster of sites in the upper right quadrant (lots of patients, lots of deviations).

Instead, we see almost the opposite. Our sites with the fastest accrual produced, in general, higher quality data. Slow sites had a large variance, with not much relation to quality: some did well, but some of the worst offenders were among the slowest enrollers.

There are probably a number of reasons for this trend. I believe the two major factors at work here are:

- Focus. Having more patients in a particular study gives sites a powerful incentive to focus more time and effort into the conduct of that study.

- Practice. We get better at most things through practice and repetition. Enrolling more patients may help our site staff develop a much greater mastery of the study protocol.

We will continue to explore the relationship between enrollment and various quality metrics, and I hope to be able to share more soon.

Tuesday, June 19, 2012

Pfizer Shocker: Patient Recruitment is Hard

The trial piloted a number of innovations, including some novel and intriguing Patient Reported Outcome (PRO) tools. Unfortunately, most of these will likely not have been given the benefit of a full test, as the trial was killed due to low patient enrollment.

The fact that a trial designed to enroll fewer than 300 patients couldn’t meet its enrollment goal is sobering enough, but in this case the pain is even greater due to the fact that the study was not limited to site databases and/or catchment areas. In theory, anyone with overactive bladder in the entire United States was a potential participant.

And yet, it didn’t work. In a previous interview with Pharmalot, Pfizer’s Craig Lipset mentions a number of recruitment channels – he specifically cites Facebook, Google, Patients Like Me, and Inspire, along with other unspecified “online outreach” – that drove “thousands” of impressions and “many” registrations, but these apparently did not come even close to yielding the required number of consented patients.

Two major questions come to mind:

1. How were patients “converted” into the study? One of the more challenging aspects of patient recruitment is often getting research sites engaged in the process. Many – perhaps most – patients are understandably on the fence about being in a trial, and the investigator and study coordinator play the single most critical role in helping each patient make their decision. You cannot simply replace their skill and experience with a website (or “multi-media informed consent module”).

2. Did they understand the patient funnel? I am puzzled by the mention of “thousands of hits” to the website. That may seem like a lot, if you’re not used to engaging patients online, but it’s actually not necessarily so.

Jakob Nielsen's famous "Lurker Funnel" seems worth mentioning here...

In the prior interview, Lipset says:

I think some of the staunch advocates for using online and social media for recruitment are still reticent to claim silver bullet status and not use conventional channels in parallel. Even the most aggressive and bullish social media advocates, generally, still acknowledge you’re going to do this in addition to, and not instead of more conventional channels.

This makes Pfizer’s exclusive reliance on these channels all the more puzzling. If no one is advocating disintermediating the sites and using only social media, then why was this the strategy?

I am confident that someone will try again with this type of trial in the near future. Hopefully, the Pfizer experience will spur them to invest in building a more rigorous recruitment strategy before they start.

[Update 6/20: Lipset weighed in via the comments section of the Pharmalot article above to clarify that other DTP aspects of the trial were tested and "worked VERY well". I am not sure how to evaluate that clarification, given the fact that those aspects couldn't have been tested on a very large number of patients, but it is encouraging to hear that more positive experiences may have come out of the study.]

Wednesday, January 4, 2012

Public Reporting of Patient Recruitment?

A few years back, I was working with a small biotech company as they were ramping up to begin their first-ever pivotal trial. One of the team leads had just produced a timeline for enrollment in the trial, which was being circulated for feedback. Seeing as they had never conducted a trial of this size before, I was curious about how he had arrived at his estimate. My bigger clients had data from prior trials (both their own and their

He proudly shared with me the secret of his methodology: he had looked up some comparable studies on ClinicalTrials.gov, counted the number of listed sites, and then compared that to the sample size and start/end dates to arrive at an enrollment rate for each study. He’d then used the average of all those rates to determine how long his study would take to complete.
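For the record, the calculation he described looks roughly like the sketch below. The `RegistryStudy` fields and the sample numbers are my own hypothetical stand-ins, not real registry data, and the code reproduces his method rather than endorsing it: it assumes every listed site enrolled for the entire start-to-end window.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class RegistryStudy:
    """Hypothetical record of what one might read off a registry listing."""
    listed_sites: int
    sample_size: int
    start: date
    end: date


def months_between(start: date, end: date) -> float:
    # Approximate months using an average month length of 30.44 days.
    return (end - start).days / 30.44


def naive_rate(study: RegistryStudy) -> float:
    """Patients per site per month, assuming all listed sites were
    active for the whole study window (the assumption that fails)."""
    site_months = study.listed_sites * months_between(study.start, study.end)
    return study.sample_size / site_months


def naive_duration_months(comparables, target_patients, planned_sites):
    """Average the per-study rates, then project the new study's length."""
    avg_rate = sum(naive_rate(s) for s in comparables) / len(comparables)
    return target_patients / (avg_rate * planned_sites)


# Made-up comparables, purely to show the mechanics.
comparables = [
    RegistryStudy(40, 600, date(2006, 1, 1), date(2008, 1, 1)),
    RegistryStudy(25, 300, date(2007, 6, 1), date(2009, 3, 1)),
]
print(round(naive_duration_months(comparables, 500, 30), 1), "months")
```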

If you’ve ever used ClinicalTrials.gov in your work, you can immediately determine the multiple, fatal flaws in that line of reasoning. The data simply doesn’t work like that. And to be fair, it wasn’t designed to work like that: the registry is intended to provide public access to what research is being done, not provide competitive intelligence on patient recruitment.

I’m therefore sympathetic, but skeptical, of a recent article in PLoS Medicine, Disclosure of Investigators' Recruitment Performance in Multicenter Clinical Trials: A Further Step for Research Transparency, that proposes to make reporting of enrollment a mandatory part of the trial registry. The authors would like to see not only actual randomized patients for each principal investigator, but also how that compares to their “recruitment target”.

The entire article is thought-provoking and worth a read. The authors’ main arguments in favor of mandatory recruitment reporting can be boiled down to:

- Recruitment in many trials is poor, and public disclosure of recruitment performance will improve it

- Sponsors, patient groups, and other stakeholders will be interested in the information

- The data “could prompt queries” from other investigators

Image: Philip Johnson's Glass House from Staib via Wikimedia Commons.

Sunday, April 24, 2011

Social Networking for Clinical Research

However, the negative results published today in Nature Biotechnology on a groundbreaking trial in ALS deserve to be celebrated. The trial was conducted exclusively through PatientsLikeMe, the online medical social network that serves as a forum for patients in all disease areas to “share real-world health experiences.”

According to a very nice write-up in the Wall Street Journal, the trial was conceived and initiated by ALS patients who were part of the PatientsLikeMe ALS site:

Jamie Heywood, chairman and co-founder of PatientsLikeMe, said the idea for the new study came from patients. After the 2008 paper reporting lithium slowed down the disease in 16 ALS patients, some members of the site suggested posting their experiences with the drug in an online spreadsheet to figure out if it was working. PatientsLikeMe offered instead to run a more rigorous observational study with members of the network to increase chances of getting a valid result.

The study included standardized symptom reporting from 596 patients (149 taking lithium and 447 matched controls). After 9 months, the patients taking lithium showed almost no difference in ALS symptoms compared to their controls, and preliminary (negative) results were released in late 2008. Although the trial was not randomized and not blinded – significant methodological issues, to be sure – it is still exciting for a number of reasons.

First, the study was conducted at an incredible rate of speed. Only 9 months elapsed between PatientsLikeMe deploying its tool to users and the release of topline results. In contrast, 2 more traditional, controlled clinical trials that were initiated to verify the first study’s results had not even managed to enroll their first patient during that time. In many cases like this – especially looking at new uses of established, generic drugs – private industry has little incentive to conduct an expensive trial. And academic researchers tend to move at a pace that, while not quite glacial, is not as rapid as acutely-suffering patients would like.

(The only concern I have about speed is the time it took to get this paper published. Why was there a 2+ year gap between results and publication?)

Second, this trial represents one of the best uses of “off-label” patient experience that I know of. Many of the physicians I talk to struggle with off-label, patient-initiated treatment: they cannot support it, but it is difficult to argue with a patient when there is so little hard evidence. This trial represents an intelligent path towards tapping into and systematically organizing some of the thousands of individual off-label experiences and producing something clinically useful. As the authors state in the Nature paper:

Positive results from phase 1 and phase 2 trials can lead to changes in patient behavior, particularly when a drug is readily available. [...] The ongoing availability of a surveillance mechanism such as ours might help provide evidence to support or refute self-experimentation.

Ironically, the fact that the trial found no benefit for lithium may have the most far-reaching benefit. A positive trial would have been open to criticism for its inability to compensate for placebo effect. These results run counter to expected placebo effect, lending strong support to the conclusion that it was thoughtfully designed and conducted. I hope this will be immense encouragement to others looking to take this method forward.

A lot has been written over the past 3-4 years about the enormous power of social media to change healthcare as we know it. In general, I have been skeptical of most of these claims, as most of them fail to plausibly explain the connection between "Lots of people on Facebook" and "Improved clinical outcomes". I applaud the patients and staff at PatientsLikeMe for finding a way to work together to break new ground in this area.

Monday, March 21, 2011

From Russia with (3 to 20 times more) Love

NPR Marketplace Health Desk Reporter Gregory Warner uncovers the truths about clinical trials in Russia; namely, the ability for biopharmaceutical companies to enroll patients 3 to 20 times faster than in the more established regions of North America and Western Europe.

Of course, as you might expect, the NPR reporter does not “uncover” that – rather, the 3 to 20 times faster “truth” is simply a verbatim statement from the CEO of ClinStar, a CRO specializing in running trials in Russia and Eastern Europe. There is no explanation of the 3-to-20 number, or why there is such a wide confidence interval (if that’s what that is).

The full NPR story goes on to hint that the business of Russian clinical trials may be a bit on the ethically cloudy side by associating it with past practices of lavishing gifts and attention on leading physicians (no direct tie is made – the reporter, however, not so subtly notes that one person who used to work in Russia as a drug rep now works in clinical trials). I think the implication here is that Russia gets results by any means necessary, and the pharma industry is excitedly queuing up to get its trials done faster.

However, this speed factor is coupled with the extremely modest claim that clinical trial business in Russia is “growing at 15% a year.” While this is certainly not a bad rate of growth, it’s hardly explosive. It’s in fact comparable to the revenue growth of the overall CRO market for the few years preceding the current downturn, estimated at 12.2%, and dwarfed by the estimated 34% annual growth of the industry in India.

From my perspective, the industry seems very hesitant to put too many eggs in Eastern Europe’s basket just yet. We need faster trials, certainly, but we need reliable and clean data even more. Recent troubling research experience with Russia -- most notably the dimebon fiasco, where overwhelmingly positive data in Russian phase 2 trials have turned out to be completely irreproducible in larger western trials -- has left the industry wary about the region. And wink-and-nod publicity about incredible speed gains probably will ultimately hurt wider acceptance of Eastern European trials more than it will help.

Tuesday, March 1, 2011

What is the Optimal Rate of Clinical Trial Participation?

Of critical concern is the fact that despite numerous years of discussion and the implementation of new federal and state policies, very few Americans actually take part in clinical trials, especially those at greatest risk for disease. Of the estimated 80,000 clinical trials that are conducted every year in the U.S., only 2.3 million Americans take part in these research studies -- or less than one percent of the entire U.S. population.

The paper goes on to discuss the underrepresentation of minority populations in clinical trials, and does not return to this point. And while it's certainly not central to the paper's thesis (in fact, in some ways it works against it), it is a perception that certainly appears to be a common one among those involved in clinical research.

When we say that "only" 2.3 million Americans take part in clinical research, we rely directly on an assumption that more than 2.3 million Americans should take part.

This leads immediately to the question: how many more?

If we are trying to increase participation rates, the magnitude of the desired improvement is one of the first and most central facts we need. Do we want a 10% increase, or a 10-fold increase? The steps required to achieve these will be radically different, so it would seem important to know.

It should also be pointed out: in some very real sense, the ideal rate of clinical trial participation, at least for pre-marketing trials, is 0%. Participating in these trials by definition means being potentially exposed to a treatment that the FDA believes has insufficient evidence of safety and/or efficacy. In an ideal world, we would not expose any patient to that risk. Even in today's non-ideal world, we have already decided not to expose any patients to medications that have not produced some preliminary evidence of safety and efficacy in animals. That is, we have already established one threshold below which we believe human involvement is unacceptably risky -- in a better world, with more information, we would raise that threshold much higher than the current criteria for IND approval.

This is not just a hypothetical concern. Where we set our threshold for acceptable risk should drive much of our thinking about how much we want to encourage (or discourage) people from shouldering that risk. Landmine detection, for example, is a noble but risky profession: we may agree that it is acceptable for rational adults to choose to enter into that field, and we may certainly applaud their heroism. However, that does not mean that we will unanimously agree on how many adults should be urged to join their ranks, nor does it mean that we will not strive and hope for the day that no human is exposed to that risk.

So, we're not talking about the ideal rate of participation, we're talking about the optimal rate. How many people should get involved, given a) the risks involved in being exposed to investigational treatment, against b) the potential benefit to the participant and/or mankind? For how many will the expected potential benefit outweigh the expected total cost? I have not seen any systematic attempt to answer this question.

The first thing that should be obvious here is that the optimal rate of participation should vary based upon the severity of the disease and the available, approved medications to treat it. In nonserious conditions (eg, keratosis pilaris), and/or conditions with a very good recovery rate (eg, veisalgia), we should expect participation rates to be low, and in some cases close to zero in the absence of major potential benefit. Conversely, we should desire higher participation rates in fatal conditions with few if any legitimate treatment alternatives (eg, late-stage metastatic cancers). In fact, if we surveyed actual participation rates by disease severity and prognosis, I think we would find that this relationship generally holds true already.

I should qualify the above by noting that it really doesn't apply to a number of clinical trial designs, most notably observational trials and phase 1 studies in healthy volunteers. Of course, most of the discussion around clinical trial participation does not apply to these types of trials, either, as they are mostly focused on access to novel treatments.